An Arizona State University professor is researching how to track malevolent social media bots by using a human touch.

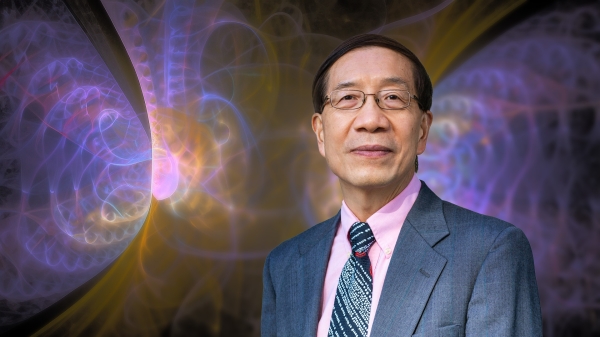

Victor Benjamin, who researches artificial intelligence, is looking at how people’s reactions to social media posts can be mined for clues on content that may be generated by automated accounts called bots.

“My co-author and I had this hunch that as bots are becoming more prolific on the internet, users are becoming more able to detect when something looks fishy,” said Benjamin, an assistant professor in the Information Systems Department in the W. P. Carey School of Business at ASU.

“Could we apply bot-detection technology and augment that by relying on human intelligence in the crowd responses?”

Benjamin's most recent paper on detecting malicious bots was published in PeerJ Computer Science, an open-access journal. He worked with Raghu Santanam, who holds the McCord Chair of Business in the Information Systems Department. That research looked at how the same fake news, translated across various regional dialects, may be detected using advanced linguistic models.

Bots are dangerous because they spread misinformation and conspiracy theories.

“This vaccine misinformation is scary stuff,” he said, so finding a faster and better way of detecting bots is increasingly important. That’s why Benjamin decided to look at whether adding human judgment to the computer algorithms would make them more accurate.

He used the platform Reddit because its posts and replies lend themselves to studying crowd reactions. The researchers wanted to weigh how certain people were when they identified a bot.

“You could have two users and one might say, ‘I think this is a bot’ and the other might say, ‘I know for sure this is a bot.’

“That’s the angle of our research — how to signal different certainties. You don’t want to blindly trust every crowd response.”

One factor that can hamper research is the lack of public data sets from social media platforms. Reddit is maintained by volunteer moderators who share lists among themselves of which accounts might be bots. The researchers collected those lists, then analyzed the crowd responses to posts from those accounts.

They applied “speech act theory,” a linguistic theory, to determine how certain a commenter was that they had found a bot.

“It’s a way to quantify the intended or underlying meaning of spoken utterances,” Benjamin said.

In the replies, the researchers looked at whether “bot” was mentioned, which was a strong indicator that the account really was a bot. Sentiment, such as a strong negative reaction to the topic of bots, was a less important indicator.
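To make the idea concrete, here is a minimal Python sketch of how replies might be scored by whether they mention “bot” and how certain they sound. It is not the researchers’ model: the cue phrases, the numeric weights and the function names `reply_certainty` and `crowd_score` are all assumptions chosen for illustration.

```python
import re

# Hypothetical cue phrases, chosen only for illustration.
HEDGES = ("i think", "maybe", "might be", "probably", "seems like")
BOOSTERS = ("i know", "for sure", "definitely", "obviously", "clearly")

# Matches "bot" or "bots" as a whole word, so "robot" does not count.
BOT_WORD = re.compile(r"\bbots?\b", re.IGNORECASE)


def reply_certainty(reply: str) -> float:
    """Return a score in [0, 1] for how confidently a reply calls out a bot."""
    if not BOT_WORD.search(reply):
        return 0.0  # no mention of "bot", so no signal
    text = reply.lower().replace("\u2019", "'")  # normalize curly apostrophes
    if any(cue in text for cue in BOOSTERS):
        return 1.0  # assumed weight for a confident claim
    if any(cue in text for cue in HEDGES):
        return 0.4  # assumed weight for a hedged claim
    return 0.7  # plain mention with no certainty cue


def crowd_score(replies: list[str]) -> float:
    """Average certainty across all replies to one post (0.0 if there are none)."""
    if not replies:
        return 0.0
    return sum(reply_certainty(r) for r in replies) / len(replies)
```

In this sketch, “I know for sure this is a bot” outweighs “I think this is a bot,” and a reply that never mentions bots contributes nothing. A real system would presumably learn such weights from labeled data rather than hard-code them.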

The research, which is currently under review, found that analyzing the human reactions added to the accuracy of the computational bot-detection system.

It’s important to root out bots because they are a major source of misinformation spread on social media. Bots controlled by Russian trolls are a major driver of the lies that the 2020 election was stolen, researchers say. Last spring, Benjamin authored an op-ed column in the Boston Globe about how social media platforms need to step up the fight against misinformation.

“(Bots) hijack conversations on controversial issues to derail or inflame the discussion,” he wrote. “For example, bots have posed as Black Lives Matter activists and shared divisive posts designed to stoke racial tensions. When real people try to make their voices heard online, they do so within a landscape that’s increasingly poisoned and polarized by bots.”

One way social media platforms can help curb bots is by releasing more data to their users, who could then decide whether a post is from a bot.

“We’re relying on public, open-source data — whatever the platforms make available to us. But the platforms have a lot of data they never reveal, such as how often users log in or how many hours their activity level remains continuous. A bot might have a 16-hour session,” he said.

“If you see someone posting very inflammatory messages, why can’t the platform reveal that data? ‘This user posts 300 messages a day, and they’re all inflammatory about America.’

“Or if there’s a hashtag that suddenly becomes popular on Twitter, Twitter has the metadata to show how that hashtag was formed. Was it organic growth or did it appear millions of times out of nowhere within a few minutes?”
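The organic-versus-sudden distinction Benjamin describes can be sketched in a few lines of Python, assuming a platform exposed the timestamps of a hashtag’s uses. Everything here is hypothetical: the function names, the five-minute window and the 50 percent threshold are illustrative assumptions, not values from the research or from any platform.

```python
from collections import Counter


def peak_share(timestamps: list[int], window_seconds: int = 300) -> float:
    """Fraction of all uses that fall inside the single busiest fixed window.

    `timestamps` are Unix-style seconds; windows are fixed, non-overlapping buckets.
    """
    if not timestamps:
        return 0.0
    buckets = Counter(t // window_seconds for t in timestamps)
    return max(buckets.values()) / len(timestamps)


def looks_like_burst(
    timestamps: list[int], window_seconds: int = 300, threshold: float = 0.5
) -> bool:
    """Flag a hashtag when most of its uses land in one short window.

    The threshold is an assumed value for illustration only.
    """
    return peak_share(timestamps, window_seconds) >= threshold
```

A hashtag used once a minute over 10 hours would have only a tiny share of its uses in any one window, while a hashtag that appears hundreds of times within a few minutes would have nearly all of them in one. A high share alone does not prove bot activity, since breaking news can also spike, so it would only be one signal among several.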

No private data would need to be revealed.

“We just need metadata on usage of a hashtag or origin of country of where a hashtag is most frequently tweeted from.

“If it’s a Black Lives Matter tagline being tweeted from Russia, we should be suspicious.”

The problem is that it’s not in the social media platforms’ best interest to do anything that would decrease engagement, he said.

“The more a user engages, whether it’s a bot or not, the more it helps the value of the platform.

“If they say, ‘30 percent of users are bots,’ what does that do to the value of the platform?”

Scrutiny of social media platforms heightened during the divisive 2016 election.

“With Facebook, I’ll give them credit. They released data saying, ‘We noticed a lot of advertising paid for by Russian state agencies,’ ” Benjamin said.

“And Twitter put out a small grant for improving the conversational health of social media. They acknowledged some of their responsibility for maintaining the quality of online conversations, but they’re still not at the level we want them to be of releasing the metadata.”

Benjamin called the current state of affairs “an arms race” between bot authors and bot detection.

“Social media bots out in the wild today are always listening for new instructions from their owners. They can change their behavior in real time.

“If you have a static detection method, invariably the bots will learn to evade it.”

That’s why a system incorporating humans could be faster and more accurate. But the system would have to be adaptable, learning linguistic patterns from different languages.

The next frontier for bots — and using human detection — will be video.

“If you go on YouTube, there are now algorithmically generated videos that are completely generated by bots. It’s a lot of the same stuff — to spread misinformation and random conspiracies and so on,” he said.

The bots are creating narrative videos with unique content and music.

“Some are of low quality, but you wouldn’t be able to tell whether they are bot-generated or by someone who is new to creating videos,” he said.

One way to catch bot-generated videos is by applying “theory of mind,” the ability to consider how another person would see something, which is difficult for a bot to reproduce. For example, a human would align the visual and audio content in a video, but a bot might not.

“Where would a human content creator apply theory of mind that a bot might not, and how can we see those discrepancies?” Benjamin asked.

He said that vaccine misinformation amplified by bots is especially scary.

“Never before in human history have foreign governments been so successful in being able to target the populace of another country,” he said.

Top image courtesy of Pixabay.com
